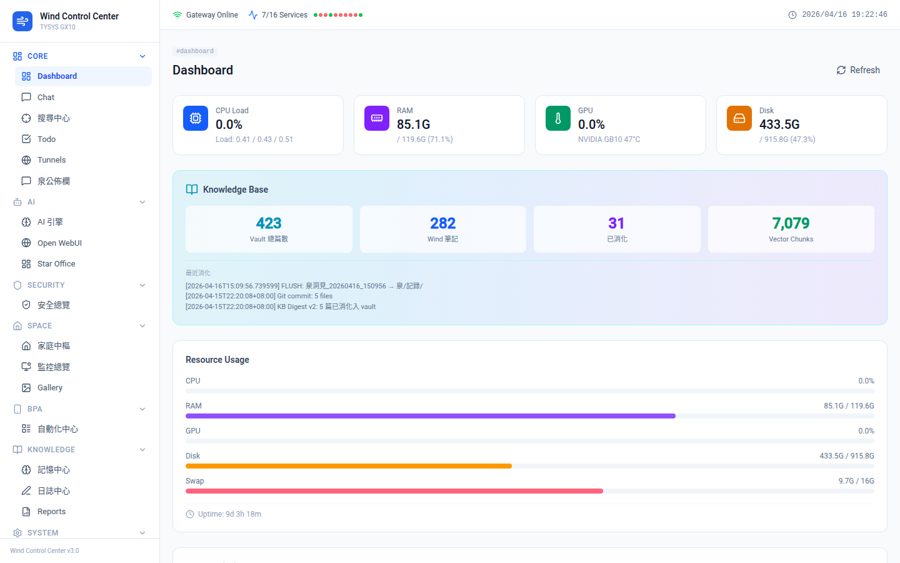

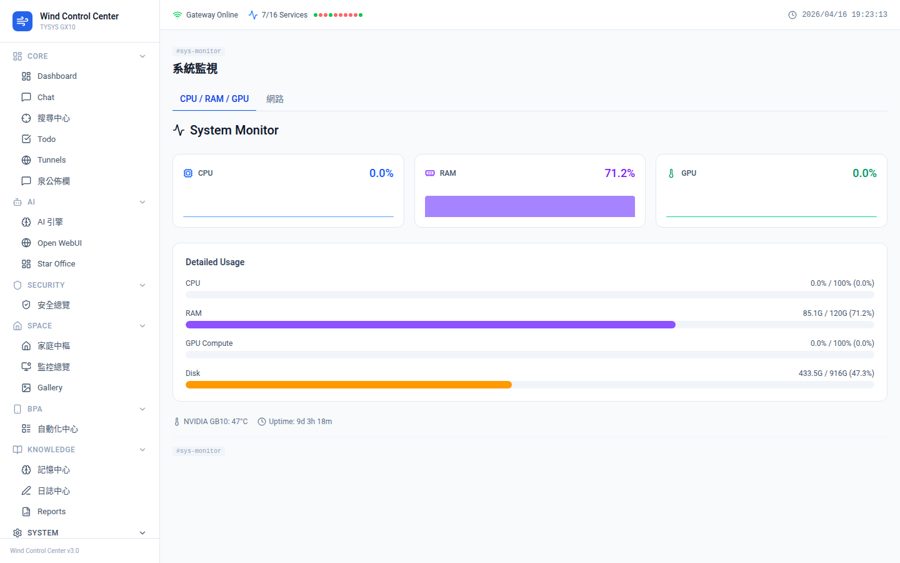

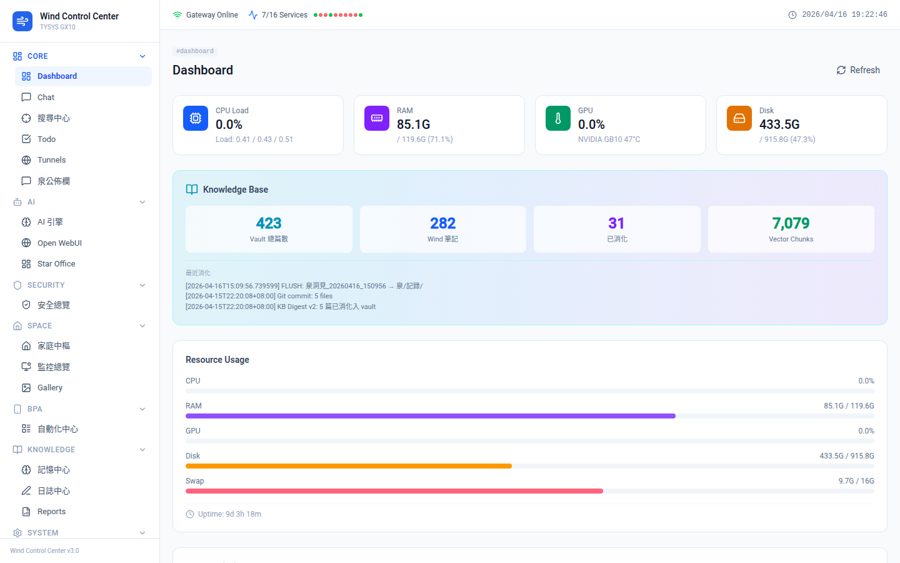

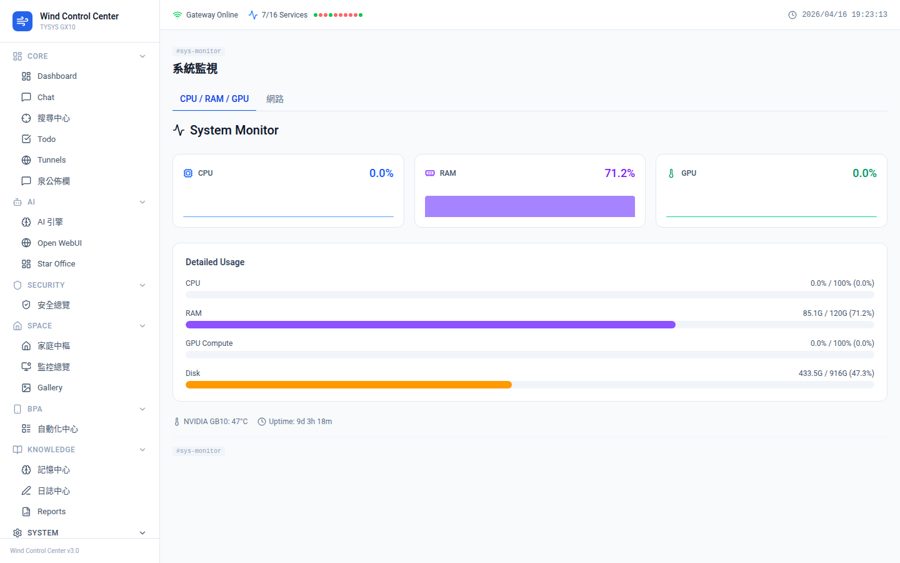

This isn't a demo environment.

This is what I run daily.

GX10 self-built GPU server. 14 models. 22 services. The same infrastructure I use to build your project.

AI Deployment Engineer based in Taiwan. I run 14 LLMs on a self-hosted GPU server with 22 production services live 24/7. I build systems that work in the real world — not just in demos.

Every project is deployed on real infrastructure and tested against actual workflows — not mocked up for a presentation.


Not a buzzword list. These are the technologies running in production on my server right now.

Most AI developers wrap an API call. I run the infrastructure. Running 14 LLMs on a self-hosted GPU server means I understand what breaks in production, not just what works in a Jupyter notebook. I've built systems that are still running 6 months later, not demos that get shelved.

Private deployment is my specialty. I build on-premise and hybrid deployments where your data never leaves your server. No documents are sent to OpenAI. Your SOPs, customer data, and internal knowledge stay on your infrastructure.

Projects range from focused builds (a RAG knowledge base over your documents, 2–3 weeks) to full AI infrastructure setups (hybrid architecture, automation pipelines, and monitoring, 2–3 months). I prefer scoped projects with clear deliverables over open-ended retainers.

My work spans manufacturing (DXF laser cutting quoting), textile/fashion (AI pattern generation and virtual try-on), logistics, and business process automation for multiple SMEs in Taiwan. The problems are different; the architecture patterns are often the same.

I work in UTC+8 (Taiwan) and am available for async collaboration with any timezone. For real-time calls, my hours overlap easily with Singapore, Japan, and Australia, and partially with Europe (European morning). US projects work well async with daily updates.

Tell me the problem. I'll tell you if AI can solve it — and if it can, I'll build it so it keeps running.